Edge Delta HTTP Source


Overview

The HTTP source node receives log data directly from applications that send logs over HTTP. This is a common method for centralized log collection, especially in microservice and event-driven architectures.


Note: Customers with HTTP source, TCP source or UDP source nodes should not use or update to Agent version v0.1.97.

This node requires Edge Delta agent version v0.1.70 or higher.

Note: As of v2.11.0, the HTTP input correctly splits newline-delimited JSON (NDJSON) into individual messages instead of treating them as a single message.

AI Team: Configure this source using the HTTPS connector for streamlined setup in AI Team.

- outgoing_data_types: log

Example Configuration

nodes:
  - name: my_http_input
    type: http_input
    port: 3421
    log_parsing_mode: full
    read_timeout: 10s
    included_paths:
      - /v1/.*
    authentication:
      strategy: Bearer
      secret: "testXYZ"

Required Parameters

name

A descriptive name for the node. This is the name that will appear in pipeline builder and you can reference this node in the YAML using the name. It must be unique across all nodes. It is a YAML list element so it begins with a - and a space followed by the string. It is a required parameter for all nodes.

nodes:
  - name: <node name>
    type: <node type>

type: http_input

The type parameter specifies the type of node being configured. It is specified as a string from a closed list of node types. It is a required parameter.

nodes:
  - name: <node name>
    type: <node type>

port

Enter the port number that the http_input type node should listen on. It is specified as an integer and is a required parameter.

nodes:
  - name: <node name>
    type: http_input
    port: <port number>

Optional Parameters

disabled

The disabled parameter disables the node in the pipeline. When set to true, the agent ignores the node at startup. You can toggle nodes on or off in the pipeline builder. It is specified as a boolean, defaults to false, and is optional.

nodes:
  - name: <node name>
    type: <node type>
    disabled: true

included_paths

The included_paths parameter is used to filter incoming traffic to only accept requests from specified paths. This helps reduce input noise and enables routing to specific HTTP input nodes. It is specified as one or more regex patterns and is an optional parameter. When not specified, the HTTP input will accept requests from all paths.

nodes:
  - name: http_input
    type: http_input
    port: 3421
    read_timeout: 10s
    included_paths:
      - /v1/.*

Cloud Fleet Configuration

When using HTTP input nodes in Cloud Fleets, the included_paths parameter is critical for configuring multiple HTTP inputs:

Important Cloud Fleet Constraints:

- Cloud Fleets support port 80 for HTTP traffic and port 443 for HTTPS/TLS traffic

- Custom ports (e.g., 3421, 8080) cannot be used

- When configuring multiple HTTP inputs on the same port, you must use path filtering with included_paths to separate traffic

- Without included_paths filters, all HTTP input nodes will receive all incoming data, causing duplication

- If you only have one HTTP input node, included_paths is optional and the node will accept all paths

Multiple HTTP Inputs Example:

In this example, three HTTP input nodes all use the same port (80 for HTTP, but could be 443 for HTTPS/TLS) and each accepts different paths. The first node only accepts paths starting with /service-a/, the second only accepts /service-b/ paths, and the third handles /metrics/ paths.

nodes:
  - name: service_a_input
    type: http_input
    port: 80
    included_paths:
      - /service-a/.*
  - name: service_b_input
    type: http_input
    port: 80
    included_paths:
      - /service-b/.*
  - name: metrics_input
    type: http_input
    port: 80
    included_paths:
      - /metrics/.*

Path Filtering Notes:

- Patterns are regular expressions (e.g., /v1/.* matches /v1/logs, /v1/metrics)

- Each HTTP input node should have unique, non-overlapping path patterns

- Requests not matching any included_paths pattern will be rejected

For troubleshooting Cloud Fleet HTTP input issues, see the Cloud Pipelines Troubleshooting Guide.

excluded_paths

Minimum Agent Version: v2.11.0

The excluded_paths parameter allows you to exclude specific paths from processing, even when they match the included_paths patterns. This is useful when you want to accept most paths with a broad pattern but exclude specific endpoints. Excluded paths take precedence over included paths, meaning if a request matches both an included path and an excluded path, it will be rejected.

It is specified as one or more regex patterns and is an optional parameter.

nodes:
  - name: http_input
    type: http_input
    port: 3421
    read_timeout: 10s
    included_paths:
      - /v1/.*
    excluded_paths:
      - /v1/health
      - /v1/metrics

In this example, the node accepts all paths starting with /v1/ except for /v1/health and /v1/metrics, which are excluded.

Combining included_paths and excluded_paths:

| included_paths | excluded_paths | Request Path | Result |
|---|---|---|---|
| /v1/.* | /v1/health | /v1/logs | Accepted |
| /v1/.* | /v1/health | /v1/health | Rejected |
| /v1/.* | /v1/apps/.* | /v1/apps/test | Rejected |
| Not specified | /internal/.* | /logs | Accepted |
| Not specified | /internal/.* | /internal/debug | Rejected |
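The last two rows of the table describe excluded_paths used without included_paths. A minimal sketch of that case is shown here. The /internal/.* pattern is taken from the table, while the node name and port are illustrative values reused from the earlier examples.

```yaml
nodes:
  - name: http_input
    type: http_input
    port: 3421
    # No included_paths: all paths are accepted unless excluded below.
    excluded_paths:
      - /internal/.*   # e.g. /internal/debug is rejected, /logs is accepted
```

Because excluded_paths requires agent version v2.11.0 or higher, a configuration like this would not be expected to work on older agents.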

Use Cases:

- Exclude health check endpoints from log processing while accepting all other API paths

- Filter out noisy internal endpoints while using broad path matching

- Exclude metrics endpoints that should be handled by a different pipeline

log_parsing_mode

The log_parsing_mode parameter controls how incoming HTTP request bodies are processed and split into individual log entries. This is particularly important when receiving JSON payloads that contain embedded newlines, which could previously cause a single JSON object to be incorrectly split into multiple log entries.

Available options:

- full (default) - Auto-detects JSON based on Content-Type header and payload structure

- json - Forces JSON parsing, handling embedded newlines within JSON strings

- basic - Processes as newline-delimited logs (original behavior, splits on newlines)

When to use each mode:

full mode (recommended):

- Default behavior that handles most use cases automatically

- Detects JSON based on Content-Type header (application/json)

- Falls back to line processing for non-JSON content

- Best choice when receiving mixed content types

json mode:

- Use when you know all incoming data will be JSON

- Prevents JSON objects with embedded newlines from being split incorrectly

- Handles single JSON objects, JSON arrays, and NDJSON (newline-delimited JSON)

- Falls back to line processing if JSON parsing fails

basic mode:

- Use when receiving plain text logs with one log entry per line

- Each newline character creates a separate log entry

- Original HTTP input behavior before log_parsing_mode was introduced

nodes:
  - name: http_input_json
    type: http_input
    port: 8080
    log_parsing_mode: json # Parse as JSON, preserving embedded newlines
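For comparison, a node that only receives plain text with one log entry per line could select basic mode instead. This is a sketch under that assumption; the node name http_input_text is hypothetical and the port is illustrative.

```yaml
nodes:
  - name: http_input_text   # hypothetical node name
    type: http_input
    port: 8080
    log_parsing_mode: basic # Split the request body on newlines, one log entry per line
```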

Example - Problem Solved by JSON Mode:

Before log_parsing_mode: json, a JSON payload with embedded newlines would be incorrectly split:

// Input JSON with embedded newlines:
{"id": "123", "message": "Line 1\nLine 2\nLine 3", "level": "INFO"}

// Without log_parsing_mode: json - INCORRECT (3 broken log entries):
{"id": "123", "message": "Line 1
Line 2
Line 3", "level": "INFO"}

// With log_parsing_mode: json - CORRECT (1 complete log entry):
{"id": "123", "message": "Line 1\nLine 2\nLine 3", "level": "INFO"}

Content-Type Header Detection:

When using full mode, the HTTP input detects JSON based on the Content-Type header:

# This request will be processed as JSON (auto-detected):
curl -X POST \
  -H "Content-Type: application/json" \
  -d '{"id": "123", "msg": "Log with\nnewlines"}' \
  http://localhost:8080/logs

# This request will be processed as lines:
curl -X POST \
  -H "Content-Type: text/plain" \
  -d 'Line 1
Line 2
Line 3' \
  http://localhost:8080/logs

Backward Compatibility:

The default full mode maintains backward compatibility with existing configurations. No changes are required for deployments that don’t send JSON with embedded newlines.

authentication

The authentication parameter defines how incoming requests are authenticated. The strategy is specified as a string; Basic and Bearer are supported. For Bearer authentication, you specify a secret token. For Basic authentication, you specify a username and password. Authentication is an optional parameter.

Bearer:

This field supports secret references for secure credential management. Instead of hardcoding sensitive values, you can reference a secret configured in your pipeline.

To use a secret in the GUI:

- Create a secret in your pipeline’s Settings > Secrets section (see Secrets)

- In this field, select the secret name from the dropdown list that appears

To use a secret in YAML:

Reference it using the syntax: '{{ SECRET secret-name }}'

Example:

field_name: '{{ SECRET my-credential }}'

Note: The secret reference must be enclosed in single quotes when using YAML. Secret values are encrypted at rest and resolved at runtime, ensuring no plaintext credentials appear in logs or API responses.

nodes:
  - name: http_input
    type: http_input
    port: 3421
    read_timeout: 10s
    included_paths:
      - /v1/.*
    authentication:
      strategy: Bearer
      secret: "<your_bearer_token>"
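Combining the secret reference syntax described above with the Bearer strategy might look like the following sketch. The secret name my-bearer-token is hypothetical and stands for a secret already created in the pipeline's Settings > Secrets section.

```yaml
nodes:
  - name: http_input
    type: http_input
    port: 3421
    authentication:
      strategy: Bearer
      # Single quotes are required around the secret reference.
      secret: '{{ SECRET my-bearer-token }}'   # hypothetical secret name
```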

Basic:

Basic credentials support secret references in the same way as the Bearer secret described above: create the secret in your pipeline's Settings > Secrets section, then select it in the GUI or reference it in YAML with '{{ SECRET secret-name }}', enclosed in single quotes.

nodes:
  - name: my_http_input
    type: http_input
    port: 8080
    included_paths:
      - /v1/.*
    authentication:
      strategy: Basic
      username: <username>
      password: <password>

listen

The listen parameter specifies the address on which the node listens for incoming traffic. It is specified as a string and is optional.

nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    listen: <host>

rate_limit

The rate_limit parameter enables you to control data ingestion based on system resource usage. This advanced setting helps prevent source nodes from overwhelming the agent by automatically throttling or stopping data collection when CPU or memory thresholds are exceeded.

Use rate limiting to prevent runaway log collection from overwhelming the agent in high-volume sources, protect agent stability in resource-constrained environments with limited CPU/memory, automatically throttle during bursty traffic patterns, and ensure fair resource allocation across source nodes in multi-tenant deployments.

When rate limiting triggers, pull-based sources (File, S3, HTTP Pull) stop fetching new data, push-based sources (HTTP, TCP, UDP, OTLP) reject incoming data, and stream-based sources (Kafka, Pub/Sub) pause consumption. Rate limiting operates at the source node level, where each source with rate limiting enabled independently monitors and enforces its own thresholds.

Rate limiting vs backpressure: Rate limiting and backpressure are different mechanisms. Rate limiting controls data ingestion at the source based on CPU or memory thresholds. Backpressure controls data output at the destination when in-memory queues fill up. Backpressure logs such as "backpressure: dataCh at 92% capacity" do not appear when rate limiting triggers, and rate limiting logs do not appear during backpressure events.

Observability: When rate limiting is active in enforce mode, the agent does not produce INFO, WARN, or ERROR level logs. To confirm that rate limiting is triggering, check the source node’s Bytes I/O metrics in the Edge Delta app for gaps or drops that coincide with expected throttling periods.

Configuration Steps:

- Click Add New in the Rate Limit section

- Click Add New for Evaluation Policy

- Select Policy Type:

  - CPU Usage: Monitors CPU consumption and rate limits when usage exceeds defined thresholds. Use for CPU-intensive sources like file parsing or complex transformations.

  - Memory Usage: Monitors memory consumption and rate limits when usage exceeds defined thresholds. Use for memory-intensive sources like large message buffers or caching.

  - AND (composite): Combines multiple sub-policies with AND logic. All sub-policies must be true simultaneously to trigger rate limiting. Use when you want conservative rate limiting (both CPU and memory must be high).

  - OR (composite): Combines multiple sub-policies with OR logic. Any sub-policy can trigger rate limiting. Use when you want aggressive rate limiting (either CPU or memory being high triggers).

- Select Evaluation Mode. Choose how the policy behaves when thresholds are exceeded:

  - Enforce (default): Actively applies rate limiting when thresholds are met. Pull-based sources (File, S3, HTTP Pull) stop fetching new data, push-based sources (HTTP, TCP, UDP, OTLP) reject incoming data, and stream-based sources (Kafka, Pub/Sub) pause consumption. Use in production to protect agent resources.

  - Monitor: Logs when rate limiting would occur without actually limiting data flow. Use for testing thresholds before enforcing them in production.

  - Passthrough: Disables rate limiting entirely while keeping the configuration in place. Use to temporarily disable rate limiting without removing configuration.

- Set Absolute Limits and Relative Limits (for CPU Usage and Memory Usage policies)

Note: If you specify both absolute and relative limits, the system evaluates both conditions and rate limiting triggers when either condition is met (OR logic). For example, if you set absolute limit to 1.0 CPU cores and relative limit to 50%, rate limiting triggers when the source uses either 1 full core OR 50% of available CPU, whichever happens first.

For CPU Absolute Limits: Enter value in full core units:

  - 0.1 = one-tenth of a CPU core

  - 0.5 = half a CPU core

  - 1.0 = one full CPU core

  - 2.0 = two full CPU cores

For CPU Relative Limits: Enter percentage of total available CPU (0-100):

  - 50 = 50% of available CPU

  - 75 = 75% of available CPU

  - 85 = 85% of available CPU

For Memory Absolute Limits: Enter value in bytes:

  - 104857600 = 100Mi (100 × 1024 × 1024)

  - 536870912 = 512Mi (512 × 1024 × 1024)

  - 1073741824 = 1Gi (1 × 1024 × 1024 × 1024)

For Memory Relative Limits: Enter percentage of total available memory (0-100):

  - 60 = 60% of available memory

  - 75 = 75% of available memory

  - 80 = 80% of available memory

- Set Refresh Interval (for CPU Usage and Memory Usage policies). Specify how frequently the system checks resource usage:

  - Recommended Values:

    - 10s to 30s for most use cases

    - 5s to 10s for high-volume sources requiring quick response

    - 1m or higher for stable, low-volume sources

The system fetches current CPU/memory usage at the specified refresh interval and uses that value for evaluation until the next refresh. Shorter intervals provide more responsive rate limiting but incur slightly higher overhead, while longer intervals are more efficient but slower to react to sudden resource spikes.

The GUI generates YAML as follows:

# Simple CPU-based rate limiting
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: cpu_usage
        evaluation_mode: enforce
        absolute_limit: 0.5 # Limit to half a CPU core
        refresh_interval: 10s

# Simple memory-based rate limiting
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: memory_usage
        evaluation_mode: enforce
        absolute_limit: 536870912 # 512Mi in bytes
        refresh_interval: 30s
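The note on combining absolute and relative limits can be sketched as a single policy that sets both keys. This is an illustrative fragment that reuses the values from that note (1.0 cores and 50%). It assumes both keys can sit side by side in the same evaluation_policy, as the note implies.

```yaml
# CPU policy with both limits; rate limiting triggers when either is exceeded
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: cpu_usage
        evaluation_mode: enforce
        absolute_limit: 1.0 # One full CPU core
        relative_limit: 50  # 50% of available CPU
        refresh_interval: 10s
```

On a host with four cores, for example, the absolute limit of one core would be reached before the relative limit of 50%, so the absolute limit would be the effective trigger.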

Composite Policies (AND / OR)

When using AND or OR policy types, you define sub-policies instead of limits. Sub-policies must be siblings (at the same level)—do not nest sub-policies within other sub-policies. Each sub-policy is independently evaluated, and the parent policy’s evaluation mode applies to the composite result.

- AND Logic: All sub-policies must evaluate to true at the same time to trigger rate limiting. Use when you want conservative rate limiting (limit only when CPU AND memory are both high).

- OR Logic: Any sub-policy evaluating to true triggers rate limiting. Use when you want aggressive protection (limit when either CPU OR memory is high).

Configuration Steps:

- Select AND (composite) or OR (composite) as the Policy Type

- Choose the Evaluation Mode (typically Enforce)

- Click Add New under Sub-Policies to add the first condition

- Configure the first sub-policy by selecting policy type (CPU Usage or Memory Usage), selecting evaluation mode, setting absolute and/or relative limits, and setting refresh interval

- In the parent policy (not within the child), click Add New again to add a sibling sub-policy

- Configure additional sub-policies following the same pattern

The GUI generates YAML as follows:

# AND composite policy - both CPU AND memory must exceed limits
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: and
        evaluation_mode: enforce
        sub_policies:
          # First sub-policy (sibling)
          - policy_type: cpu_usage
            evaluation_mode: enforce
            absolute_limit: 0.75 # Limit to 75% of one core
            refresh_interval: 15s
          # Second sub-policy (sibling)
          - policy_type: memory_usage
            evaluation_mode: enforce
            absolute_limit: 1073741824 # 1Gi in bytes
            refresh_interval: 15s

# OR composite policy - either CPU OR memory can trigger
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: or
        evaluation_mode: enforce
        sub_policies:
          - policy_type: cpu_usage
            evaluation_mode: enforce
            relative_limit: 85 # 85% of available CPU
            refresh_interval: 20s
          - policy_type: memory_usage
            evaluation_mode: enforce
            relative_limit: 80 # 80% of available memory
            refresh_interval: 20s

# Monitor mode for testing thresholds
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: memory_usage
        evaluation_mode: monitor # Only logs, doesn't limit
        relative_limit: 70 # Test at 70% before enforcing
        refresh_interval: 30s
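The Passthrough evaluation mode described earlier has no YAML example on this page. The sketch below assumes its YAML value is the lowercase string passthrough, by analogy with enforce and monitor. That spelling is an assumption and should be checked against YAML generated by the GUI.

```yaml
# Passthrough mode - configuration kept in place, rate limiting disabled
nodes:
  - name: <node name>
    type: <node type>
    rate_limit:
      evaluation_policy:
        policy_type: cpu_usage
        evaluation_mode: passthrough # assumed value; no limiting is applied
        absolute_limit: 0.5
        refresh_interval: 10s
```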

read_timeout

The read_timeout parameter specifies how long to wait for incoming data before timing out the connection. It is specified as a duration string (e.g., 1m, 30s, 10s) and is optional.

Default: 1m

nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    read_timeout: 10s

compression

The compression parameter specifies the expected compression format of incoming request bodies. The HTTP input node will decompress the data accordingly before processing.

| Value | Description |
|---|---|
| uncompressed | (Default) No compression, process request body as-is. |
| gzip | Decompress request body using gzip. |
| zstd | Decompress request body using Zstandard (zstd). |
| snappy | Decompress request body using Snappy. |

It is specified as a string and is optional.

nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    compression: gzip

channel_size

The channel_size parameter sets the size of the internal data channel buffer. It is specified as an integer, has a default of 1000, and is optional.

parallel_worker_count

The parallel_worker_count parameter sets the number of workers that process incoming data in parallel. It is specified as an integer, has a default of 2, and is optional.
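As a sketch, channel_size and parallel_worker_count could be set together on a node that expects higher throughput. The values 2000 and 4 are illustrative only, not recommendations from this page; the documented defaults are 1000 and 2.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    channel_size: 2000        # illustrative; default is 1000
    parallel_worker_count: 4  # illustrative; default is 2
```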

source_metadata

This option is used to define which detected resources and attributes to add to each data item as it is ingested by Edge Delta. You can select:

- Required Only: This option includes the minimum required resources and attributes for Edge Delta to operate.

- Default: This option includes the required resources and attributes plus those selected by Edge Delta.

- High: This option includes the required resources and attributes along with a larger selection of common optional fields.

- Custom: With this option selected, you can choose which attributes and resources to include. The required fields are selected by default and can’t be unchecked.

Based on your selection in the GUI, the source_metadata YAML is populated as two dictionaries (resource_attributes and attributes) with Boolean values.

See Choose Data Item Metadata for more information on selecting metadata.
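The shape of the generated source_metadata YAML, as described above, might look like the following sketch. The attribute names are placeholders rather than real field names; the actual keys depend on what the GUI offers for this source.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    source_metadata:
      resource_attributes:
        <resource attribute name>: true   # placeholder key, included
      attributes:
        <attribute name>: false           # placeholder key, excluded
```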

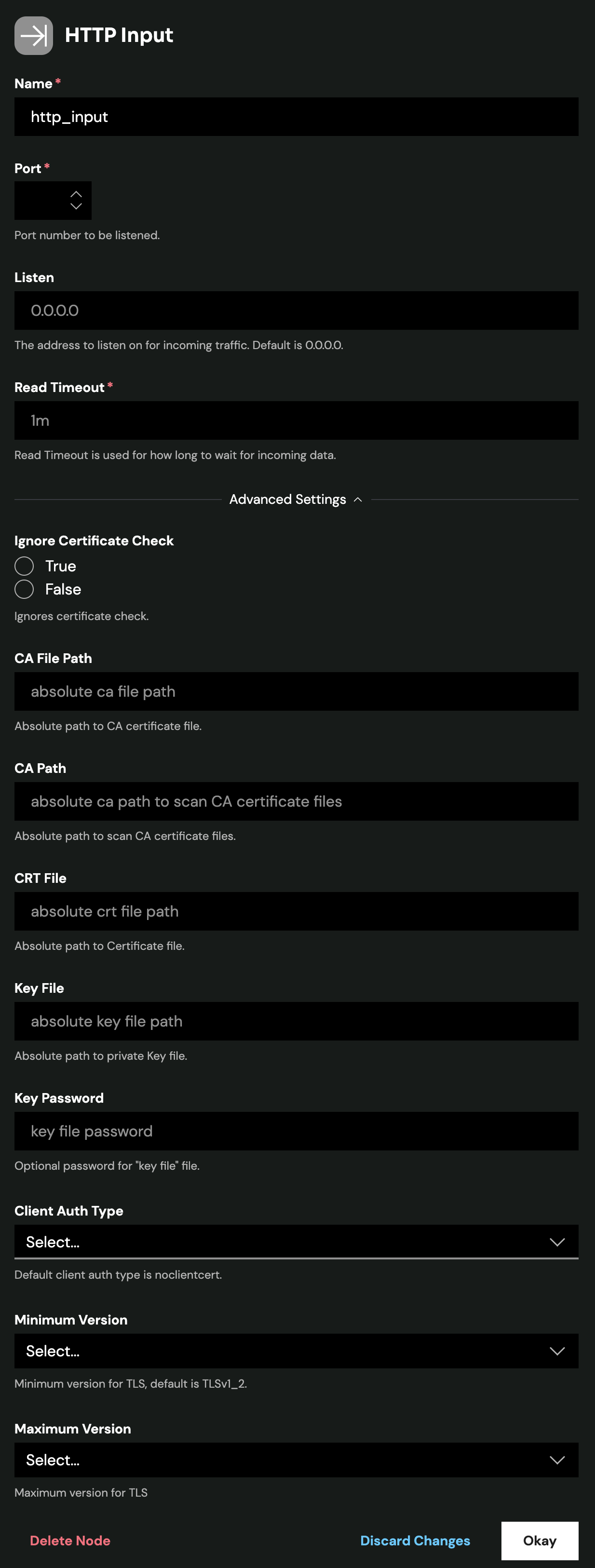

tls

Configure TLS settings for secure connections to this source. TLS is optional and typically used when the source node needs to accept encrypted connections or perform mutual TLS authentication with clients.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      <tls options>

CA Certificate File

Specifies the absolute path to a single CA (Certificate Authority) certificate file used to verify client certificates during mutual TLS authentication. This file contains the trusted CA certificate that signed the client certificates. Use this when you have a single CA certificate. (YAML parameter: ca_file)

When to use: Required when client_auth_type is set to verifyclientcertifgiven or requireandverifyclientcert. Choose either ca_file or ca_path, not both.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      ca_file: /certs/ca.pem
      client_auth_type: requireandverifyclientcert

CA Certificate Path

Specifies a directory path containing one or more CA certificate files for client certificate verification. Use this when you need to trust multiple CAs or when managing CA certificates across multiple files. All certificate files in the directory will be loaded. (YAML parameter: ca_path)

When to use: Alternative to ca_file when you have multiple CA certificates. Required when client_auth_type is set to verifyclientcertifgiven or requireandverifyclientcert. Choose either ca_file or ca_path, not both.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      ca_path: /certs/ca-certificates/
      client_auth_type: requireandverifyclientcert

Certificate File

Path to the server certificate file (public key) that will be presented to connecting clients during the TLS handshake. This certificate identifies the server and must match the private key. The certificate should be in PEM format and can include the full certificate chain. (YAML parameter: crt_file)

When to use: Required for TLS/HTTPS connections. Must be used together with key_file. Obtain this from your certificate authority or generate a self-signed certificate for testing.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      crt_file: /certs/server-cert.pem
      key_file: /certs/server-key.pem

Private Key File

Path to the private key file corresponding to the server certificate. This key must match the public key in the certificate file and is used to decrypt communications encrypted with the public key. Keep this file secure and restrict access permissions. (YAML parameter: key_file)

When to use: Required for TLS/HTTPS connections. Must be used together with crt_file. If the key file is encrypted, also specify key_password.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      crt_file: /certs/server-cert.pem
      key_file: /certs/server-key.pem
      key_password: <password> # Only if key is encrypted

Private Key Password

Password (passphrase) used to decrypt an encrypted private key file. Only needed if your private key file is password-protected. If your key file is unencrypted, omit this parameter. (YAML parameter: key_password)

When to use: Optional. Only required if key_file is encrypted/password-protected. For enhanced security, use encrypted keys in production environments.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      crt_file: /certs/server-cert.pem
      key_file: /certs/encrypted-server-key.pem
      key_password: mySecurePassword123

Client Authentication Type

Controls how client certificates are requested and validated during the TLS handshake. This setting is specific to server-side TLS and determines the mutual TLS (mTLS) behavior. Use this to enforce certificate-based authentication from connecting clients for enhanced security. (YAML parameter: client_auth_type)

Valid options:

- noclientcert - No client certificate is requested; any sent certificates are ignored. Use for standard TLS encryption without client authentication.

- requestclientcert - Client certificate is requested but not required. Useful for optional client authentication where you want to accept both authenticated and unauthenticated clients.

- requireanyclientcert - Client must send a certificate, but it is not validated against a CA. Use when you need proof a client has a certificate but don’t need to verify its authenticity.

- verifyclientcertifgiven - Certificate is requested but not required; if sent, it must be valid and signed by a trusted CA. Balances security with flexibility for mixed client environments.

- requireandverifyclientcert - Client must send a valid certificate signed by a trusted CA (full mutual TLS). Use for maximum security when all clients can be provisioned with certificates.

Default: noclientcert

When to use: Set to verifyclientcertifgiven or requireandverifyclientcert for secure environments where you need to verify client identity. When using certificate verification options, you must also configure ca_file or ca_path to specify the trusted CA certificates.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      client_auth_type: requireandverifyclientcert
      ca_file: /certs/ca.pem # Required for certificate validation

Minimum TLS Version

Minimum TLS protocol version that will be accepted from connecting clients. This enforces a baseline security level by rejecting connections from clients using older, less secure TLS versions. (YAML parameter: min_version)

Available versions:

- TLSv1_0 - Deprecated, not recommended (security vulnerabilities)

- TLSv1_1 - Deprecated, not recommended (security vulnerabilities)

- TLSv1_2 - Recommended minimum for production (default)

- TLSv1_3 - Most secure, use when all clients support it

Default: TLSv1_2

When to use: Set to TLSv1_2 or higher for production deployments. Only use TLSv1_0 or TLSv1_1 if you must support legacy clients that cannot upgrade, and be aware of the security risks. TLS 1.0 and 1.1 are officially deprecated and should be avoided.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      min_version: TLSv1_2

Maximum TLS Version

Maximum TLS protocol version that will be accepted from connecting clients. This is typically used to restrict newer TLS versions if compatibility issues arise with specific client implementations. (YAML parameter: max_version)

Available versions:

- TLSv1_0

- TLSv1_1

- TLSv1_2

- TLSv1_3

When to use: Usually left unset to allow the most secure version available. Only set this if you encounter specific compatibility issues with TLS 1.3 clients, or for testing purposes. In most cases, you should allow the latest TLS version.

YAML Configuration Example:

nodes:
  - name: <node name>
    type: <source type>
    tls:
      max_version: TLSv1_3
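The individual TLS options above can be combined. The following sketch shows one possible mutual TLS setup for an HTTP source using only parameters described in this section. The file paths are the illustrative ones used in the examples above, not required locations.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    tls:
      crt_file: /certs/server-cert.pem   # server certificate presented to clients
      key_file: /certs/server-key.pem    # matching private key
      ca_file: /certs/ca.pem             # CA used to verify client certificates
      client_auth_type: requireandverifyclientcert
      min_version: TLSv1_2
```

With client_auth_type set to requireandverifyclientcert, clients that do not present a certificate signed by the CA in ca_file would be expected to fail the TLS handshake.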

max_connections

Minimum Agent Version: v2.13.0

The max_connections parameter sets the maximum number of concurrent connections the server accepts. The server rejects new connections when this limit is reached. Set to 0 for unlimited. It is specified as an integer and is optional. The default is 0 (unlimited).

max_connections_per_ip

Minimum Agent Version: v2.13.0

The max_connections_per_ip parameter sets the maximum concurrent connections from a single IP address. Set to 0 for unlimited. It is specified as an integer and is optional. The default is 0 (unlimited).
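A sketch that sets both connection limits together follows. The values 1000 and 50 are illustrative only; both parameters require agent version v2.13.0 or higher and default to 0 (unlimited).

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    max_connections: 1000       # illustrative cap on total concurrent connections
    max_connections_per_ip: 50  # illustrative cap per client IP address
```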

read_buffer_size

Minimum Agent Version: v2.13.0

The read_buffer_size parameter sets the per-connection buffer size for reading request data. This also controls the maximum header size. Increase this value for large requests or decrease it to save memory. It is specified as a size and is optional. The default is 16KB.

write_buffer_size

Minimum Agent Version: v2.13.0

The write_buffer_size parameter sets the per-connection buffer size for writing response data. Increase this value for large responses or decrease it to save memory. It is specified as a size and is optional. The default is 4KB.
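The two buffer parameters might be configured as in the sketch below. The size literals mirror the way defaults are written on this page (16KB, 4KB). The exact size syntax the agent accepts is an assumption here and is not confirmed by this page.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    read_buffer_size: 32KB   # illustrative; raised from the 16KB default for larger headers
    write_buffer_size: 4KB   # the documented default, written out explicitly
```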

truncate_limit

Minimum Agent Version: v2.13.0

The truncate_limit parameter sets the maximum size for individual items. The server truncates items larger than this limit but smaller than drop_limit and records a metric in edgedelta_node_truncated_items. Set to nil or omit to disable truncation. It is specified as a size and is optional. The default is nil (disabled).

drop_limit

Minimum Agent Version: v2.13.0

The drop_limit parameter sets the maximum size for individual items. The server drops items larger than this limit and records a metric in edgedelta_node_dropped_items. When you set both limits, drop_limit must be greater than or equal to truncate_limit. It is specified as a size and is optional. The default is 128MB.
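The interaction between truncate_limit and drop_limit can be sketched as follows. The 1MB value is illustrative, 128MB is the documented drop_limit default, and the size literal format is an assumption based on how sizes are written on this page.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    truncate_limit: 1MB   # items above 1MB and up to drop_limit are truncated
    drop_limit: 128MB     # items above 128MB are dropped; must be >= truncate_limit
```

Under these settings, truncated items would be counted in edgedelta_node_truncated_items and dropped items in edgedelta_node_dropped_items, as described above.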

idle_timeout

The idle_timeout parameter sets how long the server keeps an idle connection open before closing it. It is specified as a duration and is optional. The default is 1m.

write_timeout

The write_timeout parameter sets how long the server waits to finish writing a response before timing out. It is specified as a duration and is optional. The default is 10s.
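The timeout parameters can be set side by side. This sketch writes out the documented defaults for idle_timeout and write_timeout and uses an illustrative read_timeout of 30s.

```yaml
nodes:
  - name: <node name>
    type: http_input
    port: <port number>
    read_timeout: 30s    # illustrative; default is 1m
    idle_timeout: 1m     # documented default
    write_timeout: 10s   # documented default
```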

reader_header_timeout

Deprecated in v2.13.0.

The reader_header_timeout parameter specifies how long the server waits to read request headers before timing out. It is specified as a duration and is optional. The default is 10s.

Testing an Endpoint

The following command can be used to test the input:

curl -X POST -d '{"json":"my log"}' <host>:<port>/<path>

The path can be any valid URL path. If included_paths is configured, the path must match one of the specified regex patterns. If included_paths is not configured, any path will be accepted.
