Edge Delta Hybrid Log Search

Deploy Edge Delta Hybrid Log Search to explore log archives in your environment using AWS and Kubernetes integrations.


Overview

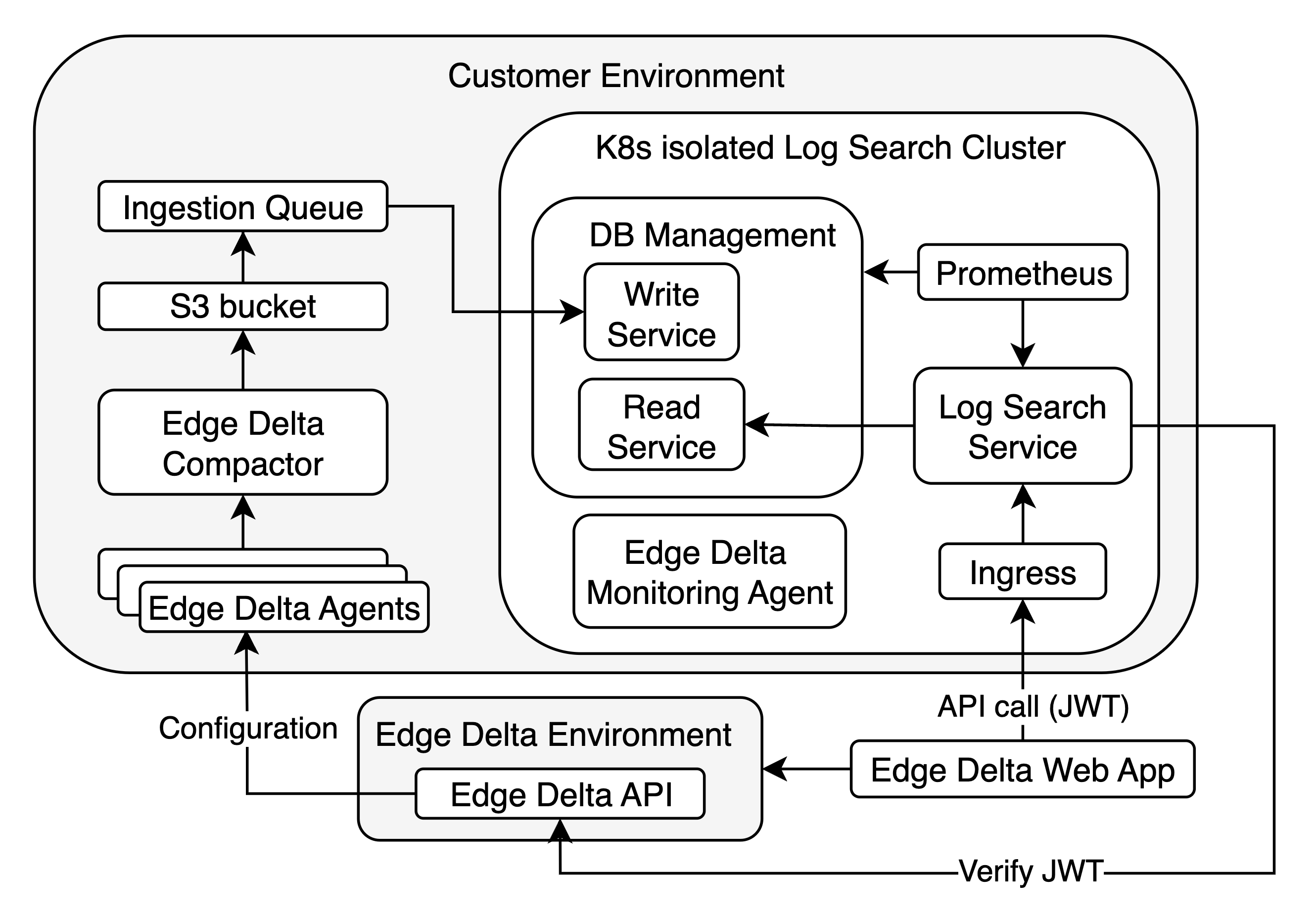

Hybrid log search is an Edge Delta implementation that enables you to use the Edge Delta web app to search archives hosted in your own environment.

In this implementation, two S3 buckets are deployed: one stores logs forwarded by the pipeline and the other is used for log search operations. The Edge Delta pipelines pull their configurations from the Edge Delta API (alternatively, they can use local ConfigMaps). To run a search, the Edge Delta web app calls the log search service through the ingress, authenticating with a JSON Web Token (JWT). The Log Search Service verifies the token with the Edge Delta API.

Prerequisites

To deploy hybrid log search, you need a dedicated EKS cluster in your AWS account:

- EBS CSI Driver Addon must be enabled to manage persistent volumes. See the AWS documentation for details.

- OIDC Provider must be created to manage service account level permissions within the cluster. See the AWS documentation for details.

- Load Balancer Controller Addon must be installed to create ingress resources with a load balancer within the cluster. See the AWS documentation for details.

In addition, you must have the following tools installed:

- AWS CLI

- kubectl

- helm

- terraform

Finally, contact Edge Delta support to enable hybrid log search for your organization.

1. Prepare an API Key and S3 buckets

1.1 Create an API Key

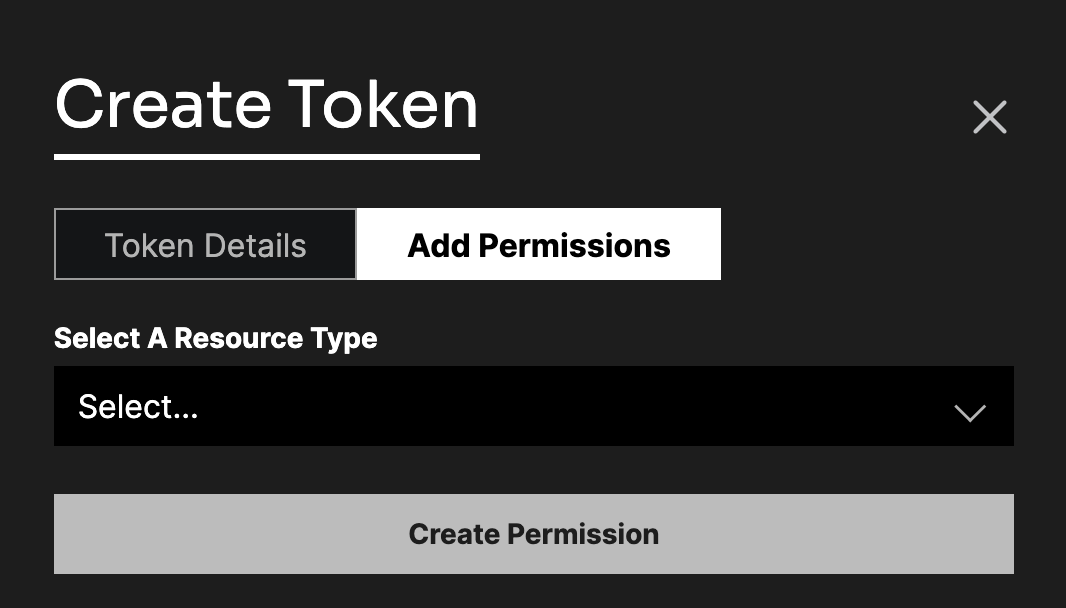

In this step you create an API key with isolated service permissions.

- Resource Type: Isolated Service

- Resources: All Current and Future Isolated Service

- Access Type: Read

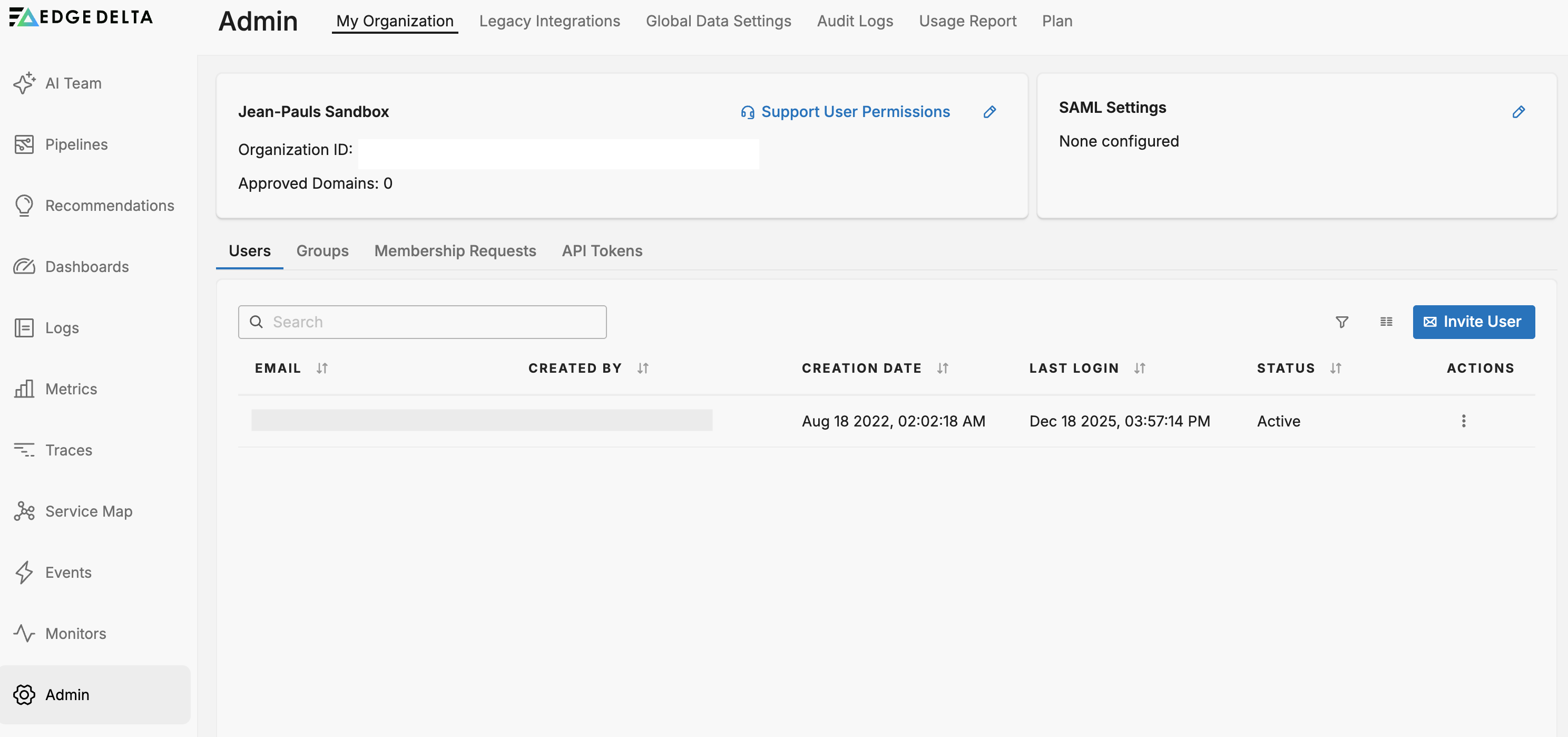

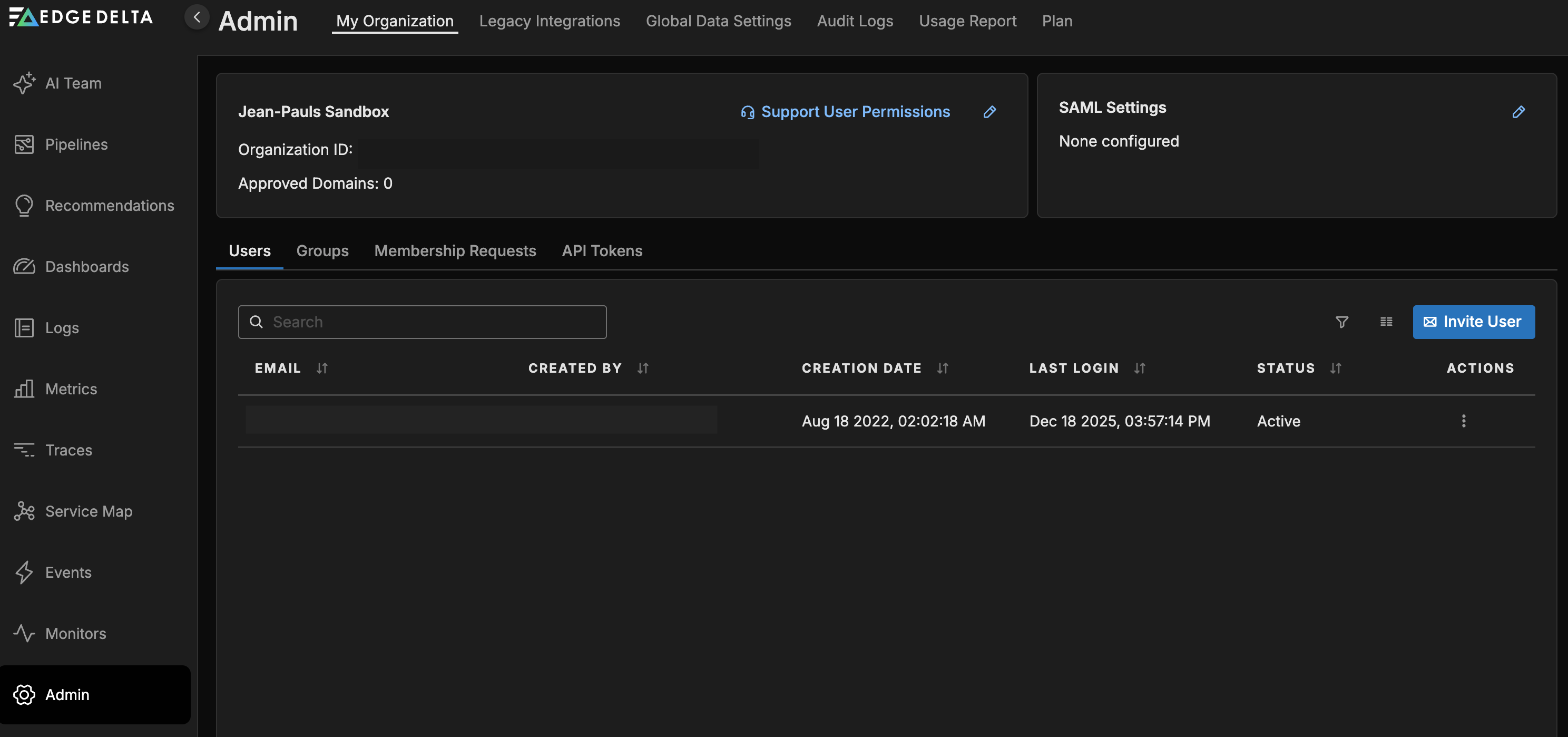

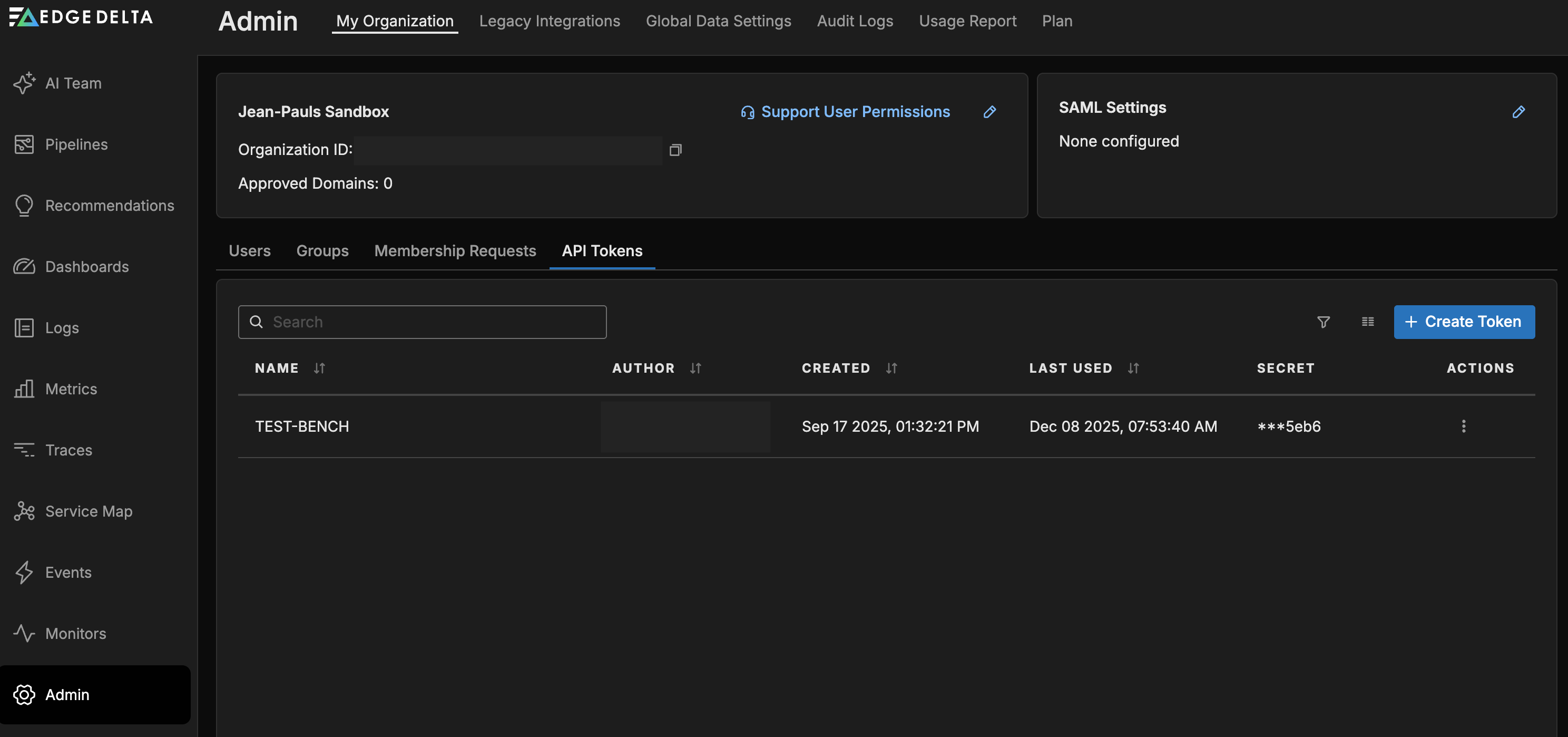

1.1.1. Click Admin and select the My Organization tab.

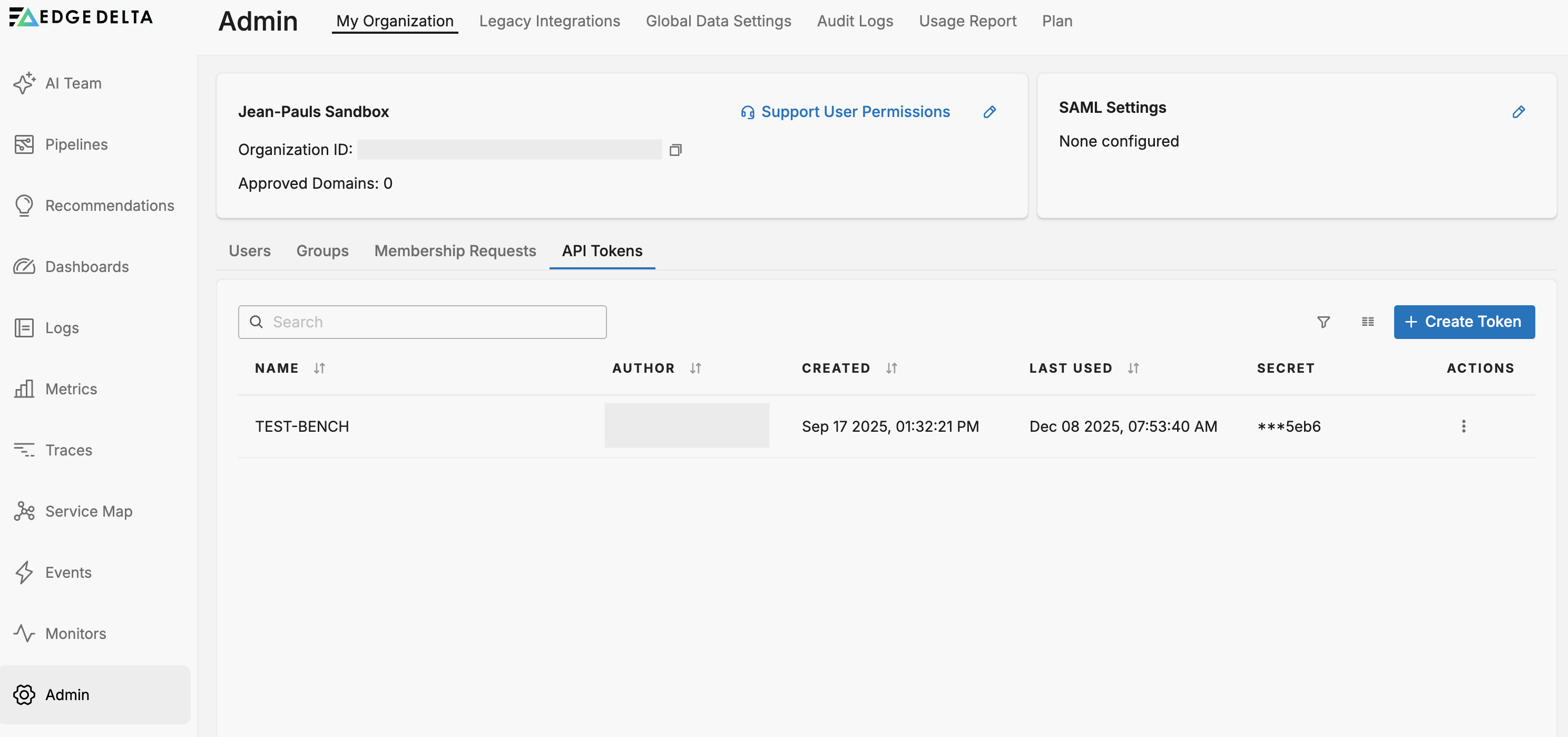

1.1.2. Click the API Tokens tab and click Create Token.

1.1.3. Enter a token name, select the Resource type (Search), Resource Instance (All Current and Future Search) and Access Type (Read).

1.1.4. Click Create.

1.1.5. Copy the access token and store it securely.

1.2 Provision S3 Buckets

Create two S3 buckets that the Hybrid Log Search Service and the Edge Delta pipeline can both access. See the AWS documentation for details.

- Log Bucket: A bucket that will be used to store logs forwarded by the pipeline.

- Disk Bucket: A bucket that will be used by hybrid log search for operations.

2. Deploy Hybrid Log Search

2.1 Create SNS and SQS resources

In this step you create SNS and SQS resources, which are required by Hybrid Log Search’s ingestion service.

2.1.1 Download the Terraform script provided by Edge Delta support and extract it:

tar -xf ed-isolated-log-search-terraform-0.1.5.tar

2.1.2 Initialize Terraform:

terraform init

2.1.3 Deploy Terraform resources, replacing the placeholders with your values:

terraform apply -var="log_bucket_name=<log_bucket>" \
  -var="disk_bucket_name=<disk_bucket>" \
  -var="tenant_id=<tenant_id>" \
  -var="node_role=<node_role>" \
  -var="environment=<environment>" \
  -var="k8s_namespace=<k8s_namespace>" \
  -var="oidc_provider_arn=<oidc_provider_arn>" \
  -var="oidc_provider_url=<oidc_provider_url>"

| Placeholder | Description |
|---|---|
| <log_bucket> | Name of the Log Bucket created in step 1.2. |
| <disk_bucket> | Name of the Disk Bucket created in step 1.2. |
| <tenant_id> | Edge Delta organization ID. |
| <node_role> | Role of the EKS cluster nodes. If specified, a policy granting access to the created SQS resources is attached to it. |
| <environment> | Environment name used to tag resources in AWS (Default value: customer-prod). |
| <k8s_namespace> | Kubernetes namespace that the log search service will be installed in (Default value: edgedelta). This must be the same namespace that is used in helm install. |
| <oidc_provider_arn> | OIDC provider ARN (optional). |
| <oidc_provider_url> | OIDC provider URL (optional). |

Alternatively, create a variable file and pass it to Terraform to supply the parameters. Example variable file my-variables.tfvars:

log_bucket_name = "<log_bucket>"
disk_bucket_name = "<disk_bucket>"
tenant_id = "<tenant_id>"
node_role = "<node_role>"
environment = "<environment>"
k8s_namespace = "<k8s_namespace>"
oidc_provider_arn = "<oidc_provider_arn>"
oidc_provider_url = "<oidc_provider_url>"

Then apply the configuration with the variable file:

terraform apply -var-file="my-variables.tfvars"
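Terraform also reads variable files written in JSON when the file name ends in .tfvars.json, which can be convenient if the values come from a script. The following is a minimal sketch, not part of the Edge Delta tooling: the function name `build_tfvars` is hypothetical, the sample values are made up, and it assumes the Terraform script declares defaults for any variable that is left out.

```python
import json


def build_tfvars(log_bucket, disk_bucket, tenant_id, node_role="",
                 environment="customer-prod", k8s_namespace="edgedelta",
                 oidc_provider_arn="", oidc_provider_url=""):
    """Collect the Terraform variables in a dict, dropping empty optional values."""
    values = {
        "log_bucket_name": log_bucket,
        "disk_bucket_name": disk_bucket,
        "tenant_id": tenant_id,
        "node_role": node_role,
        "environment": environment,
        "k8s_namespace": k8s_namespace,
        "oidc_provider_arn": oidc_provider_arn,
        "oidc_provider_url": oidc_provider_url,
    }
    # Assumption: omitted variables fall back to defaults in the Terraform script.
    return {name: value for name, value in values.items() if value}


# Hypothetical sample values; replace them with your own.
tfvars = build_tfvars("my-log-bucket", "my-disk-bucket", "my-org-id")
print(json.dumps(tfvars, indent=2))
```

Save the printed JSON as, for example, my-variables.tfvars.json and pass that file to terraform apply with -var-file.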

2.2 Create the Secret

Create a Kubernetes secret called ed-hybrid-api-token, replacing the placeholder values:

kubectl create secret generic ed-hybrid-api-token \
  --namespace=<k8s_namespace> \
  --from-literal=ed-hybrid-api-token="<API_TOKEN>"

| Placeholder | Description |
|---|---|
| <k8s_namespace> | The same namespace that you specified in step 2.1.3. |
| <API_TOKEN> | The API key you created in step 1.1. |

2.3 Deploy the data store and API

In this step you deploy Hybrid Log Search’s data store and the Isolated-Log-Search API in your K8s cluster.

2.3.1 Contact Edge Delta support for the Helm chart. The chart deploys the isolated log search API running in your environment.

2.3.2 Get a values file using the default values from the Helm chart:

helm show values ed-isolated-log-search-0.1.5.tgz > values.yaml

2.3.3 Modify the following values:

env_vars.aws_default_region

ed-clickhouse.tenantGroup.tenantGroupID

ed-clickhouse.s3Bucket

ed-clickhouse.s3Region

ed-clickhouse.serviceAccountRoleArn.clickhouse
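Assuming the dotted names above denote nested keys in values.yaml (the same convention Helm's --set flag uses), the sketch below expands them so you can see where each key sits in the file. The helper name `expand` and all sample values are hypothetical.

```python
import json


def expand(dotted):
    """Turn {"a.b.c": value} style keys into nested dictionaries."""
    nested = {}
    for key, value in dotted.items():
        *parents, leaf = key.split(".")
        node = nested
        for part in parents:
            # Create intermediate levels on demand.
            node = node.setdefault(part, {})
        node[leaf] = value
    return nested


# Hypothetical sample values; use the ones for your environment.
overrides = expand({
    "env_vars.aws_default_region": "us-west-2",
    "ed-clickhouse.tenantGroup.tenantGroupID": "my-tenant-group",
    "ed-clickhouse.s3Bucket": "my-disk-bucket",
    "ed-clickhouse.s3Region": "us-west-2",
    "ed-clickhouse.serviceAccountRoleArn.clickhouse": "my-role-arn",
})
print(json.dumps(overrides, indent=2))
```

The printed structure shows, for instance, that tenantGroupID lives under ed-clickhouse and then tenantGroup.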

If you are deploying to a multi-node cluster for availability and performance, also modify the following values:

ed-clickhouse.multiNodeEnabled: true

ed-clickhouse.keeperNodeGroupName: <keeper_node_group_name>

ed-clickhouse.clickhouseNodeGroupName: <clickhouse_node_group_name>

ed-clickhouse.clickhouseTotalNodeCount: <clickhouse_nodes_total_node_count>

A multi-node deployment requires a minimum of 7 nodes: 3 keeper nodes and 4 ClickHouse nodes. If ed-clickhouse.clickhouseTotalNodeCount is set to 4, the deployment has 2 write and 2 read ClickHouse replicas. If the ClickHouse node count is higher than 4, replicas are added at a ratio of 1 write to 2 read replicas. For example, if ed-clickhouse.clickhouseTotalNodeCount is set to 7, the deployment has a total of 7 replicas, 3 write and 4 read, each on a separate node.
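The two data points given here (4 nodes giving 2 write and 2 read replicas, 7 nodes giving 3 write and 4 read replicas) can be reproduced with a small calculation. This is only a sketch: it assumes every three nodes beyond the first four add one write and two read replicas, and that leftover nodes become read replicas. The second assumption is not confirmed by this guide, so check node counts other than 4 and 7 with Edge Delta support.

```python
def replica_split(total_nodes):
    """Return (write, read) replica counts for a given ClickHouse node count."""
    if total_nodes < 4:
        raise ValueError("a multi-node deployment needs at least 4 ClickHouse nodes")
    extra = total_nodes - 4
    # Baseline of 2 write replicas, plus one more for each full group of 3 extra nodes.
    write = 2 + extra // 3
    # Assumption: every remaining node hosts a read replica.
    read = total_nodes - write
    return write, read


for count in (4, 7):
    print(count, replica_split(count))
```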

2.3.4 Install the chart, passing in the updated values file:

helm install ed-log-search ./ed-isolated-log-search-0.1.5.tgz \
  --atomic --timeout 30m0s \
  -n <k8s_namespace> --create-namespace \
  --dependency-update \
  -f values.yaml

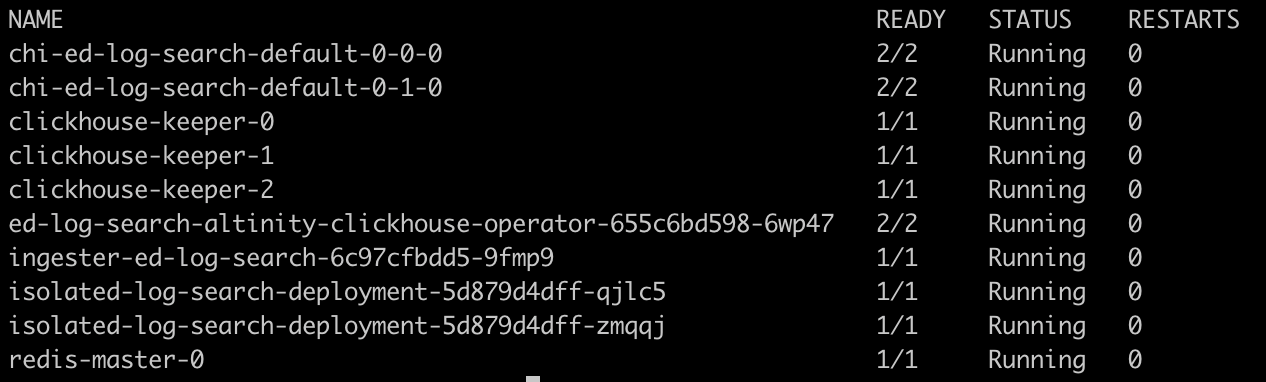

After the deployment, check that the pods are up and running in the deployment namespace.

If some of the pods remain in the "Pending" state:

- Check whether there are enough resources within the cluster

- Check whether the EBS add-on is configured and Persistent Volume Claims are created
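The pod check can be scripted. The sketch below is one possible approach: it reads the JSON that kubectl get pods -o json returns and lists the pods whose phase is Pending. The function names `pending_pods` and `fetch_pods` are hypothetical, and `fetch_pods` assumes kubectl is installed and configured for the cluster.

```python
import json
import subprocess


def pending_pods(pod_list):
    """Return the names of pods whose status.phase is Pending."""
    return [
        item["metadata"]["name"]
        for item in pod_list.get("items", [])
        if item.get("status", {}).get("phase") == "Pending"
    ]


def fetch_pods(namespace):
    """Run kubectl and parse its JSON output (requires cluster access)."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)
```

For example, pending_pods(fetch_pods("edgedelta")) returns an empty list once every pod in the edgedelta namespace has been scheduled.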

3. Deploy Ingress for the Log Service (optional)

Optionally, a Kubernetes ingress resource can be installed in the cluster to secure and manage access to the Hybrid Log Search service.

3.1 Modify this example ingress resource YAML based on your configuration and save it as ingress.yaml:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: isolated-log-search-alb-internal-ingress
  namespace: edgedelta
  annotations:
    kubernetes.io/ingress.class: alb
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS":443}]'
    alb.ingress.kubernetes.io/ssl-redirect: '443'
    alb.ingress.kubernetes.io/scheme: internal
    alb.ingress.kubernetes.io/ssl-policy: ELBSecurityPolicy-TLS13-1-2-2021-06
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/tags: Environment=customer-prod
    alb.ingress.kubernetes.io/healthcheck-path: /probes/live
    alb.ingress.kubernetes.io/healthcheck-port: '4444'
spec:
  rules:
    - host: customer-isolated-test-env.edgedelta.net
      http:
        paths:
          - path: /*
            pathType: ImplementationSpecific
            backend:
              service:
                name: isolated-log-search-service
                port:
                  number: 4444

3.2 Deploy the ingress:

kubectl apply -n <k8s_namespace> -f ingress.yaml

The host must be served over HTTPS so that the Edge Delta web app can connect to it securely.

3.3 Ask Edge Delta support to update the API configuration with the host address.

4. Deploy Edge Delta Pipelines

In this step you deploy Edge Delta pipelines with a hybrid log search configuration:

version: v3
settings:
  tag: my-hybrid-search-agent
  log:
    level: info
nodes:
  - name: kubernetes_logs
    type: kubernetes_input
    include:
      - k8s_namespace=edgedelta
      - k8s_namespace={NAMESPACE_TO_COLLECT_LOGS}
    auto_detect_line_pattern: true
    boost_stacktrace_detection: true
    enable_persisting_cursor: true
  - name: isolated_s3_archiver
    type: s3_output
    role_arn: {ROLE_ARN}
    bucket: {LOG_BUCKET_NAME}
    region: {REGION}
    disable_metadata_ingestion: true
    flush_interval: 1m
    max_byte_limit: "5MB"
    path_prefix:
      order:
        - Year
        - Month
        - Day
        - Hour
        - tag
        - host
      format: {ORG_ID}/%s/%s/%s/%s/%s/%s/
links:
  - from: kubernetes_logs
    to: isolated_s3_archiver
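To illustrate how the path_prefix setting shapes object keys in the Log Bucket, the sketch below fills the six %s slots of the format string in the listed order (Year, Month, Day, Hour, tag, host). It is an illustration only: the function name `archive_prefix` is hypothetical, the sample values are made up, and the zero-padded date fields are an assumption about how the agent renders them.

```python
from datetime import datetime, timezone


def archive_prefix(org_id, when, tag, host):
    """Fill the path_prefix format with values in the configured order."""
    fmt = org_id + "/%s/%s/%s/%s/%s/%s/"
    fields = (
        when.strftime("%Y"),  # Year
        when.strftime("%m"),  # Month (zero-padded, an assumption)
        when.strftime("%d"),  # Day
        when.strftime("%H"),  # Hour
        tag,
        host,
    )
    return fmt % fields


# Hypothetical organization ID, agent tag, and host name.
moment = datetime(2024, 3, 7, 15, tzinfo=timezone.utc)
print(archive_prefix("my-org-id", moment, "my-hybrid-search-agent", "node-1"))
# my-org-id/2024/03/07/15/my-hybrid-search-agent/node-1/
```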